Speaking of mistreating data, Roger Pielke Jr. has posted on his Substack the draft of a paper he submitted for review to a scientific journal on natural hazards, in which he argues forcefully that the latest version of the famous and highly effective clickbait “Billion Dollar Disaster” data set published by the National Oceanic and Atmospheric Administration (NOAA) fails to meet any of the data quality requirements set out by... NOAA. In fact, while it is presented as an authoritative measure of the number of major climate-related disasters in the US each year, it fails any quality criteria you might mention and represents, Pielke Jr. writes, “an egregious failure of scientific integrity.” A government agency fudging the numbers to boost climate alarmism: insert shocked face here. Or an angry one.

Pielke Jr. takes readers through the legal requirements binding on an agency like NOAA when it publishes an influential database intended to be used for private and public sector decisions. Among other things, it must be constructed using a transparent methodology, must be accurate and must be relevant to what it’s used for. Pielke Jr. shows that the NOAA billion-dollar baby fails on all counts.

First, no one knows how it is made. NOAA won’t say where it gets its numbers or, specifically, how it computes damage totals. That matters: Pielke Jr. uses the example of Hurricane Idalia, which hit Florida at the end of August 2023 and whose total insured losses were reported at about $310 million. Even doubling that amount to account for uninsured losses yields only $620 million, yet NOAA declared the total to be $3.6 billion, with no explanation of how it arrived at that figure.
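To put the Idalia gap in proportion, here is a minimal sketch of the arithmetic using only the figures quoted above. The doubling of insured losses is the rough allowance for uninsured losses described in the text, not NOAA's method, which is not published; the variable names are just for illustration.

```python
# Figures as quoted above for Hurricane Idalia, in US dollars.
insured_losses = 310e6   # reported insured losses (about $310 million)
noaa_total = 3.6e9       # total published by NOAA ($3.6 billion)

# Rough allowance from the text: double insured losses to cover uninsured ones.
doubled_estimate = 2 * insured_losses

# How far NOAA's figure sits above that rough estimate.
gap = noaa_total - doubled_estimate
ratio = noaa_total / doubled_estimate

print(f"Doubled estimate: ${doubled_estimate / 1e6:.0f} million")
print(f"NOAA total is {ratio:.1f} times the doubled estimate")
print(f"Difference: ${gap / 1e9:.2f} billion")
```

On these numbers the NOAA total is nearly six times the doubled estimate, leaving close to $3 billion without a published explanation.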

Pielke Jr. also notes that NOAA has quietly gone back and added numerous disasters to the counts for earlier years, again with no explanation and without providing earlier versions of the data set for users to compare against. But perhaps the worst violation of scientific integrity is the fact that while NOAA does now adjust the data for inflation, it doesn’t adjust it for growth in the size of the economy.

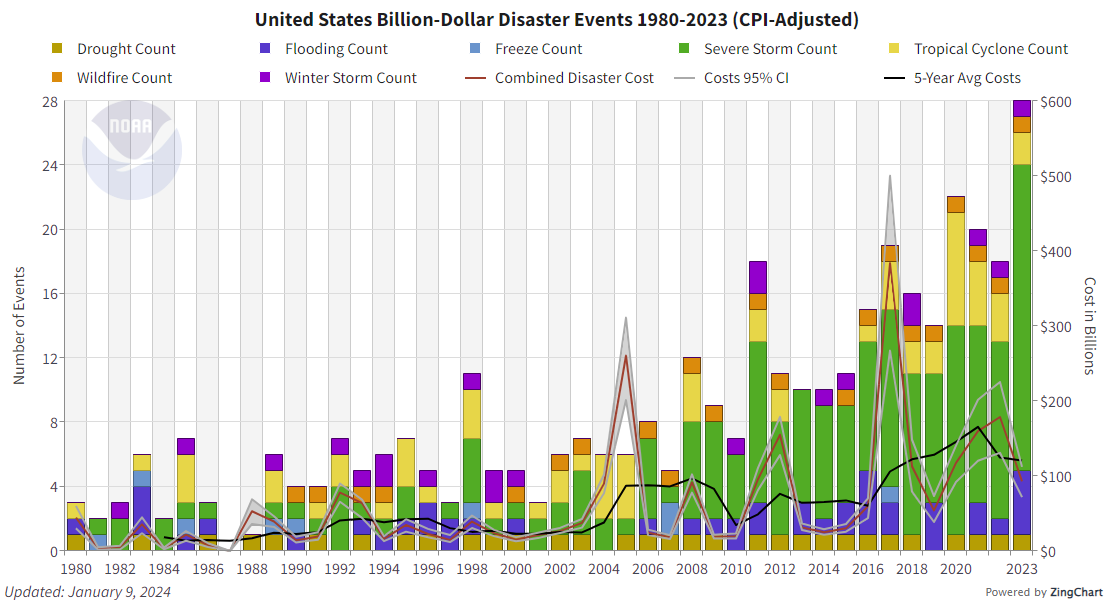

It’s an obvious one, with an obvious tendency to exaggerate the problem that no trained statistician could overlook. Since there are now more houses, roads, bridges and buildings in the path of a storm, and many of them are more valuable than those in existence in the area decades ago, even a storm of the same size today would do far more damage than it used to. By failing to “normalize” the economic data properly, they get to draw an upward-sloping line:

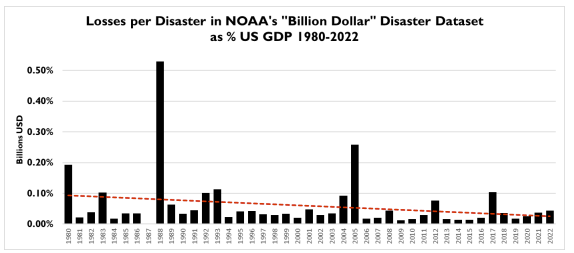

But this approach doesn’t measure the severity of the weather, it measures the size of the economy. The relevant number, and one that NOAA could easily generate, is the value of US disasters as a fraction of GDP, which is trending down, as this chart from Pielke Jr.’s paper shows:

By not normalizing the data properly, NOAA can claim the number of billion-dollar disasters is rising because the weather is getting worse, which means... climate change! Q.E.D. And then, as Pielke Jr. notes, NOAA, along with various unscrupulous politicians and journalists, routinely uses the rising number of billion-dollar disasters as a measure of the severity of weather, when it is no such thing and they know it.
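The normalization at issue, expressing each year's losses as a share of that year's GDP, can be sketched in a few lines. The numbers below are invented purely to illustrate the mechanics; they are not NOAA's data or Pielke Jr.'s, and the function name is hypothetical.

```python
def losses_as_share_of_gdp(losses, gdp):
    """Return each year's losses as a percentage of that year's GDP."""
    return {year: 100.0 * losses[year] / gdp[year] for year in losses}

# Made-up figures in billions of inflation-adjusted dollars, for illustration only.
losses = {1990: 50.0, 2005: 100.0, 2020: 150.0}
gdp = {1990: 10_000.0, 2005: 20_000.0, 2020: 30_000.0}

shares = losses_as_share_of_gdp(losses, gdp)
for year in sorted(shares):
    print(f"{year}: losses {losses[year]:.0f}bn, {shares[year]:.2f}% of GDP")
```

In this toy case raw losses triple while the share of GDP stays flat at 0.5%, which is the distinction between a bigger economy and worse weather that the unnormalized count cannot make.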

Even putting aside the proxy measure of losses as a share of GDP, there are direct measures of weather severity and they do not show an increasing trend. Which means the NOAA data set is inaccurate and irrelevant as a climate indicator.

To be sure, it has a dramatic name and the graph is scary. But not as scary as the thought that bureaucrats at NOAA are so willing to mislead the public to generate alarmist headlines and momentum behind massive, very harmful policies and… gosh… an increase in its budget to monitor the crisis.

And here is proof that these idiots are duplicitous liars and not naive true believers. They understand perfectly well that their data is fraudulent and they must hide their lies as best they can!

Rising insured property losses are often used as evidence of climate change. This has been done often in Canada. There was a report showing the increase in insurance losses for the province of Ontario (1983-2017), but when normalised for the increase in GDP there was no real change, only two anomalous years, 2005 and 2013.

Let's not forget insurance companies either refusing to insure some properties or making the premiums so high that they are de facto uninsurable.