Editor’s note: In this series University of Guelph professor Ross McKitrick offers his commentary on Unsettled: What Climate Science Tells Us, What it Doesn’t, and Why it Matters by Steven E. Koonin, physicist and former Obama Administration official. This week: Muddled models.

Being a physicist, Koonin notes the strange fact that there are about 40 major computer climate models in the world and they vary quite a bit in the results they produce. Why “being a physicist”? Because it means climate models aren’t “just physics” as some defenders claim. If they were, we would only need one, since all the models would come to the same conclusion. And the models don’t just disagree about future warming. They even disagree about the current average temperature, with the range spanning about 3°C. That’s three times the observed warming of the 20th century that the models purport to be able to explain, which doesn’t add up. And as Koonin dug further into the IPCC reports and the underlying literature, he found the problems quickly mounted.

Koonin first studied climate models almost 30 years ago as part of a team advising the U.S. government on the prospects for high-powered scientific computers to advance climate prediction. He describes the way the models organize the physical layout of the atmosphere and oceans and the resulting proliferation of processes that need to be explained. The first problem is that many key phenomena, such as cloud formation, involve processes that are simply unknown. So modelers have to make educated guesses about what goes on. The next problem is that many processes are known but take place on too small a scale for models to be able to compute them all in a reasonable amount of time. So again, modelers resort to approximations. A further problem is that initializing the model requires detailed information about the history of the oceans and atmosphere, and such data simply don’t exist. So… approximations again.

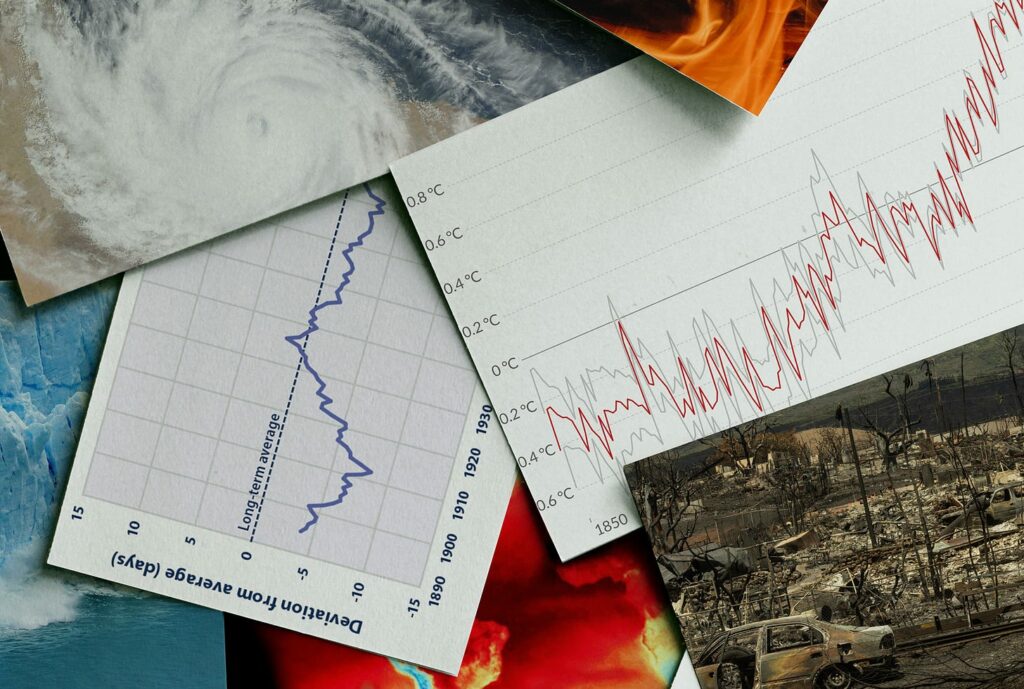

All the shortcuts would not be a problem if in the end the models could accurately predict the climate. But here we run into another big issue. The models on average do poorly at reproducing the 20th century warming pattern, even though modelers can look at the answer and tune the models to try to reproduce it. They don’t warm enough from 1910 to 1940 and they warm too much after 1980. Both errors point to the likelihood that they depend too much on sensitivity to carbon dioxide and don’t account for long-term natural variations.

Koonin sticks the blade in even further by showing evidence that the most recent generation of climate models (called CMIP6) is even more spread out in its predictions, and does even worse at reproducing the past, than did the last generation of models. This is the opposite of what you’d expect in science. But it is consistent with what would happen if modelers were trying too hard to build in a predetermined answer.

If this claim is true, some readers might object, then scientists would know about it and we’d hear about it. But a repeated theme in Koonin’s book is the contrast between what the insiders know and discuss among themselves, versus what gets told to the public. Koonin gives an example of a recent report from the U.S. National Academy of Sciences on “geoengineering” – which refers to modifying the reflectivity (“albedo”) of the planet’s surface to cool the atmosphere. The National Academy cautioned that the state of climate modeling and the uncertainties around albedo cooling make it impossible to provide reliable assessments of the risks and consequences of geoengineering. But, as Koonin points out, the exact same limitations apply to climate modeling of the effects of greenhouse gases, for all the same reasons, yet we are never told that by official science organizations. Instead, scientists and journalists alike repeatedly present model simulations as if they were reliable forecasts of the future.

Next week: Hyping Heat.

I could not agree more with Professor Koonin. Nature will quickly kill the arrogance of the climate alarmists with evidence. They have forgotten that CO2 is food for plants and increases the growth rate of the dominant life on earth so that WE may eat and breathe!

I loved the final point. The academy does not approve of geoengineering due to inaccuracies and uncertainties in the models, but blindly supports the projected temperature increases using models with the exact same inaccuracies and uncertainties. You can’t make this stuff up.

Many years ago as a young physics graduate I was proudly discussing a computer simulation of something or other that I had done when a rather senior scientist squashed me flat by remarking "Ah, computers - second rate substitutes for thought".

Well, apparently you can make this stuff up and it is believed with the absolute certainty of the Second Coming.